FERPA and AI Compliance in Higher Education: A Walkthrough of the Four Edge Cases

- David Holstein



TLDR: FERPA and AI compliance in higher education sits at an underexamined intersection. FERPA was written for a paper-records world and last meaningfully amended in 2008. AI agents reading PDFs, combining classifications, shaping institutional decisions, and generating new records present four edge cases the statute does not directly address. This piece walks through each edge case using a real Bettera-built Student Agent processing a medical certificate as the worked example. The four edge cases: the school officials exception applied to AI agents, the directory information combination question, decision-making AI, and the right of access for AI-generated records. Each gets framed as a question for counsel, illustrated by what the agent actually does.

FERPA and AI compliance in higher education sits at an intersection most institutions have not seriously worked through, even as the AI capabilities that create the compliance questions are already running in their systems.

Per ServiceNow's April 9, 2026 commercial model announcement, AI is now bundled across every tier of every ServiceNow solution. Institutions running ServiceNow have Now Assist capabilities active in their instance whether or not they have formally announced an AI deployment. The compliance posture has to catch up with what is deployed.

FERPA, last meaningfully amended in 2008, was written for a world of paper records and human school officials. The 2024 PTAC guidance on AI is helpful but limited in scope. The areas where AI agents create new questions remain largely unaddressed by federal regulation, state privacy law, or institutional policy.

We are not lawyers, and what follows is not legal advice. What we are is the only ServiceNow consulting partner exclusively focused on higher education, and we have walked R1 institutions through the FERPA conversation that has to happen before any AI agent touches educational records at scale.

Four edge cases require counsel's attention. The school officials exception applied to AI agents. The directory information combination question. The decision-making AI question. The right of access for AI-generated records. This piece walks through each one using a real Bettera-built Student Agent processing a medical accommodation request as the worked example. Each edge case gets the statute summary, the operational reality the demo illustrates, and the question to raise with counsel.

If your institution is scoping AI deployment and you have not yet had this conversation with your General Counsel, this is the framework we walk institutions through. Forward to counsel directly if helpful.

Why FERPA and AI compliance in higher education needs a fresh read

Three structural reasons FERPA needs to be re-examined now.

First: FERPA was enacted in 1974 and last meaningfully amended in 2008. The statute predates the data-rich AI era by decades. Concepts central to AI governance (model training on educational records, agent-to-agent data passage, AI-generated records about students, automated decision-making) are not addressed in the statute or in its implementing regulations, 34 CFR Part 99.

Second: PTAC guidance is helpful but incomplete. The US Department of Education's Privacy Technical Assistance Center has published guidance on student privacy in technology contexts including a 2024 update touching AI considerations. The guidance is operationally useful but consciously declines to issue formal interpretive rulings on the AI edge cases. PTAC defers to institutions to work through the application of FERPA to specific AI use cases with their own counsel.

Third: AI capability has accelerated faster than policy can respond. The April 9, 2026 ServiceNow commercial model change bundled AI across every product tier, and the Knowledge 2026 announcements expanded the AI Control Tower product family substantially. Institutions running ServiceNow at any tier have generative AI capabilities active in their instance now. The compliance posture conversation cannot wait for federal rulemaking. Institutions need a defensible working framework before the first FERPA complaint involving an AI-shaped decision lands on the Provost's desk.

These three factors create the practical situation in 2026: the AI is deployed, institutions need a defensible posture, and counsel is being asked to advise on questions the statute does not directly address. The four edge cases below are where these questions concentrate.

A guardrail before we walk through them. Bettera is not a legal advisor. The discussion that follows surfaces operational realities for counsel's review, not legal conclusions. Every institution should validate the application of FERPA to its specific AI deployments with its own General Counsel and external privacy counsel as needed.

Meet the worked example: a real AI agent processing a real student record

What follows is a 43-second demonstration of a Bettera-built Student Agent processing a medical accommodation request. The student is sick. A medical certificate from an outside physician arrives. The AI agent reads the certificate, extracts the diagnosis and recommended accommodation, cross-references the student's academic record, and asks the student to confirm the agent's interpretation before proceeding. Watch first. The four edge case sections below walk through the FERPA questions the scenario raises.

Bettera Agent in Action

The sick student, the medical certificate, and a real Bettera AI agent processing a real accommodation request. Watch what the agent does with educational record data.

The four edge cases the demo just illustrated:

The agent acted on the institution's behalf, reading a third-party document about a student.

Edge case 1: school officials exception applied to AI.

The agent combined the student's name with the academic record with a medical diagnosis and a recommended accommodation.

Edge case 2: directory information combined with protected data.

The agent generated an accommodation recommendation, shaping an institutional decision.

Edge case 3: decision-making AI.

The agent created a new artifact, its interpretation, which is now a record about the student.

Edge case 4: right of access for AI-generated records.

The next four sections walk through each one in detail.

Edge Case 1: The school officials exception applied to AI agents

What the statute says. FERPA's school officials exception (34 CFR § 99.31(a)(1)) permits institutions to disclose educational records to school officials with "legitimate educational interests" without student consent, provided the institution has specified its criteria for who qualifies as a school official in its annual FERPA notification. The exception is the operational backbone of how educational records move within an institution.

What the demo illustrates. The Bettera Student Agent is operating on the institution's behalf, accessing the student's academic record, and processing the medical certificate. It is functioning as a school official in operational reality. The agent has institutional permissions, defined scope, and an audit trail of every action it takes. But it is software, not a human.

Where the question gets harder. External AI agents (Microsoft Copilot, Anthropic's Claude, and similar commercial products) operating on the same data via the Model Context Protocol or other interoperability standards raise the question more sharply. These agents are not directly operated by the institution. They are commercial AI products the institution has procured. Does the school officials exception apply to them too? PTAC has not formally addressed it. AACRAO's FERPA guidance does not address it directly.

The question to raise with counsel. Has the institution's annual FERPA notification been updated to include AI agents (both directly operated and externally procured) in its definition of school officials? What contractual provisions need to be in place with external AI vendors for the school officials exception to apply defensibly? If a student or parent contests an AI agent's access to educational records, what is the institution's documented position?
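The "institutional permissions, defined scope, and an audit trail" described in this section can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Bettera's implementation and not a ServiceNow API: `AgentScope`, `AuditLog`, `read_record`, and the purpose and category strings are hypothetical names invented for the example. Whether a check like this supports the school officials exception is a question for counsel.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical scope an institution might assign to one AI agent."""
    agent_id: str
    purpose: str                   # the stated legitimate educational interest
    allowed_categories: frozenset  # record categories the agent may read


@dataclass
class AuditLog:
    """Hypothetical in-memory audit trail; a real one would be durable."""
    entries: list = field(default_factory=list)

    def record(self, agent_id, student_id, category, allowed):
        # Log every attempt, including denials, so access can be reconstructed.
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "student_id": student_id,
            "category": category,
            "allowed": allowed,
        })


def read_record(scope, log, student_id, category):
    """Return True only if the category falls inside the agent's defined scope."""
    allowed = category in scope.allowed_categories
    log.record(scope.agent_id, student_id, category, allowed)
    return allowed


scope = AgentScope(
    agent_id="student-agent",
    purpose="accommodation processing",
    allowed_categories=frozenset({"academic_record", "accommodation_request"}),
)
log = AuditLog()
ok = read_record(scope, log, "S-001", "academic_record")
denied = read_record(scope, log, "S-001", "disciplinary_record")
```

The design choice worth noting is that denied attempts are logged alongside permitted ones, which gives the institution something concrete to show if an agent's access is contested.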

Edge Case 2: The directory information combination question

What the statute says. FERPA permits institutions to designate certain categories as directory information (typically name, address, phone, email, dates of attendance, major) that can be disclosed without consent unless the student has opted out. Records that are not directory information (academic records, financial records, disciplinary records, medical accommodation records) require either consent or an applicable exception for disclosure.

What the demo illustrates. The Bettera Student Agent generated a derived artifact that combined the student's name (directory information) with the medical certificate content (potentially educational record, ADA-relevant, possibly HIPAA-adjacent) with the student's academic record (clearly educational record). The combined output is a new record containing multiple FERPA classifications.

Where the question gets harder. PTAC guidance on directory information predates the AI era. The traditional rule, that combinations may be governed by the strictest applicable classification, was articulated for human-generated combinations. Whether the same rule applies to AI-generated derived content has not been formally settled. The institution likely should treat the combined output as governed by the strictest applicable classification, but the operational implications are significant. Every AI-generated summary, every confirmation message, every audit log entry potentially carries the strictest classification of any input data.

The question to raise with counsel. When the institution's AI agents generate derived content combining multiple FERPA classifications, which classification governs the output? Does the institution's data classification overlay handle this combination logic automatically? What is the disclosure posture if the combined output is requested via subpoena, a public records request (FOIA or its state equivalent), or a student records access request?
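The "strictest applicable classification" rule discussed in this section is easy to express as code. The sketch below assumes a simple three-tier ordering; the tier names, their ranking, and the `classify_output` function are illustrative assumptions, since FERPA itself does not define a ranked scale and the institution's own classification scheme would govern.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Illustrative ordering only; a higher value means stricter handling."""
    DIRECTORY = 1
    EDUCATION_RECORD = 2
    MEDICAL_ACCOMMODATION = 3


def classify_output(input_classifications):
    """Apply the strictest classification of any input to the derived output."""
    inputs = list(input_classifications)
    if not inputs:
        raise ValueError("a derived artifact needs at least one classified input")
    return max(inputs)


# The demo's interpretation summary draws on all three kinds of input.
summary_classification = classify_output([
    Classification.DIRECTORY,              # student name
    Classification.EDUCATION_RECORD,       # academic record
    Classification.MEDICAL_ACCOMMODATION,  # medical certificate content
])
print(summary_classification.name)
```

Under these assumptions the summary inherits the medical accommodation tier, which is the operational consequence the section describes: every derived artifact carries the strictest classification of anything that went into it.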

Edge Case 3: The decision-making AI question

What the statute says. FERPA was written assuming institutional decisions are made by humans applying institutional policy to educational records. The statute provides procedural protections (right of access, right to amend, hearing procedures) that presume a human decision-maker is accountable for the decision.

What the demo illustrates. The Bettera Student Agent generated an accommodation recommendation. The agent's interpretation of the medical certificate, combined with its cross-reference of the academic record, produced a structured recommendation that will shape what the institution does next. Even if a human accommodation coordinator reviews the recommendation before acting on it, the AI participated in the decision.

Where the question gets harder. When an AI agent triages, routes, prioritizes, or recommends, is the institution's FERPA disclosure posture the same as when a human does the same work? PTAC has touched on automated decision-making generally but has not issued formal guidance on the FERPA-specific implications. Some state and international laws (California's CCPA, the EU AI Act, and others) are beginning to require disclosure of automated decision-making to data subjects. FERPA does not yet require it. Whether FERPA's right-of-access provisions extend to the AI's decision logic itself is unsettled.

The question to raise with counsel. When the institution's AI agents shape decisions about students (admissions triage, advising recommendations, financial aid routing, accommodation processing, conduct case prioritization, retention outreach), does the institution have a documented disclosure posture? Are students notified that AI participates in decisions affecting them? Does the institution preserve the AI's reasoning trace as a record the student could request to inspect?
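One way to make "preserve the AI's reasoning trace" concrete is a record that stores the agent's recommendation next to the named human who made the final call. The sketch below is a hypothetical data structure, not a ServiceNow or Bettera schema; `DecisionTrace` and every field name in it are assumptions made for illustration.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class DecisionTrace:
    """Hypothetical record of one AI-shaped decision about a student."""
    student_id: str
    process: str                  # e.g. "accommodation"
    agent_id: str
    inputs_used: list             # which records the agent read
    recommendation: str
    reasoning_summary: str
    reviewed_by: Optional[str] = None     # named human accountable for the outcome
    final_decision: Optional[str] = None

    def finalize(self, reviewer, decision):
        # Refuse to close the record without a named human reviewer.
        if not reviewer:
            raise ValueError("a named human reviewer is required")
        self.reviewed_by = reviewer
        self.final_decision = decision


trace = DecisionTrace(
    student_id="S-001",
    process="accommodation",
    agent_id="student-agent",
    inputs_used=["medical_certificate", "academic_record"],
    recommendation="extend assignment deadline",
    reasoning_summary="certificate recommends rest; deadline falls in that window",
)
trace.finalize(reviewer="accommodation coordinator", decision="approved")
record = asdict(trace)  # plain dict, ready to store or produce on request
```

Keeping the recommendation and the human decision in one record means that a single lookup answers both what the AI said and who decided.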

Edge Case 4: The right of access for AI-generated records

What the statute says. FERPA grants students the right to inspect and review their educational records (20 USC § 1232g(a)(1)(A)). The statutory term is "education records," defined at 20 USC § 1232g(a)(4)(A) to cover records that are "directly related to a student" and "maintained by an educational agency or institution." It is a broad definition by design.

What the demo illustrates. The Bettera Student Agent generated multiple new artifacts during the 43-second scenario: an interpretation summary, a confirmation prompt, a decision trace, audit logs, token-spend records, and a structured accommodation recommendation. Each artifact relates directly to the student. Each is maintained by the institution (in ServiceNow's AI Control Tower instrumentation and the underlying database). Each likely qualifies as an educational record subject to the student's right of access.

Where the question gets harder. AI agents generate enormous volumes of data: embeddings, vector representations, intermediate reasoning steps, and model outputs that were considered and rejected before the final recommendation. Some of this is technical infrastructure. Some is substantive interpretation of the student's record. Where the line falls between infrastructure and educational record subject to access is not formally settled. Institutions that take an expansive view will preserve and produce more on demand. Institutions that take a narrow view will produce less but face more risk if challenged.

The question to raise with counsel. Of the new record types generated by AI agents operating on educational records, which qualify as educational records under FERPA? What does the institution preserve, for how long, and what does it produce on a student records access request? Is the institution's records retention policy updated for AI-generated artifacts?
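The two-part definition and the unsettled infrastructure line can be turned into a first-pass triage that sorts artifacts for counsel's review. This sketch makes no legal determination: the `Artifact` fields, the `substantive` flag, and the three output labels are assumptions chosen for illustration, and the values assigned to each artifact are exactly the judgments counsel would need to confirm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """Hypothetical description of one AI-generated artifact."""
    name: str
    directly_related_to_student: bool
    maintained_by_institution: bool
    substantive: bool  # False for technical infrastructure such as embeddings


def triage(artifact):
    """Sort an artifact into a review bucket; not a legal conclusion."""
    meets_definition = (
        artifact.directly_related_to_student
        and artifact.maintained_by_institution
    )
    if not meets_definition:
        return "outside the two-part definition"
    if artifact.substantive:
        return "treat as educational record"
    return "flag for counsel"


artifacts = [
    Artifact("interpretation summary", True, True, True),
    Artifact("vector embedding of certificate", True, True, False),
    Artifact("aggregate token-spend report", False, True, False),
]
buckets = {a.name: triage(a) for a in artifacts}
```

The middle bucket is the point of the exercise: anything that meets the two-part definition but looks like infrastructure goes to counsel rather than being silently excluded.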

Building defensible institutional posture across all four edge cases

Five operational moves address all four edge cases at once. They are drawn from the four-layer institutional configuration framework we walk through in our piece on the ServiceNow AI Control Tower for higher education, with the FERPA classification overlay as the central focus here.

1. Update the institution's annual FERPA notification to address AI agents explicitly. Both directly operated and externally procured. Specify the criteria under which AI agents qualify as school officials with legitimate educational interest. This is the cheapest move and the one with the largest defensive value if challenged.

2. Configure the FERPA classification overlay to handle combined-classification outputs automatically. When AI agents generate derived content combining multiple classifications, the overlay applies the strictest applicable classification to the output. This becomes platform-enforced rather than policy-hoped-for. The institution's data classification system does the work, every time, by configuration.

3. Document AI decision-making participation in any process affecting students. A clear disclosure (in advising, admissions, accommodation, financial aid, conduct, retention) that AI agents may participate in shaping recommendations, with named human accountability for the final decision. This positions the institution well against the emerging state-law and international trend toward automated-decision disclosure.

4. Update records retention to cover AI-generated artifacts. Decision traces, interpretation summaries, confirmation records, and reasoning artifacts get retention periods aligned to the underlying decision they shaped. Audit logs get FERPA-aligned retention at minimum. This is where most institutional records retention policies are silent today.

5. Run an institutional FERPA-AI tabletop annually. Counsel, the registrar, the privacy officer, the CISO, and the AI governance owner walk through a realistic AI scenario (the Student Agent demo is a fine starting point) and pressure-test the institution's posture against each of the four edge cases. Annually, at minimum. More often if the institution is actively expanding its AI footprint.

These five moves do not resolve the legal questions. Counsel still has interpretive work to do. But they give the institution a documented, defensible posture while that work happens, one it can point to in response to any single complaint or audit.
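Move 4 (retention aligned to the underlying decision, with a floor for audit logs) can be sketched as a small lookup. The retention periods below are placeholders, not values drawn from FERPA or any institutional schedule, and `RETENTION_YEARS`, `AUDIT_LOG_MINIMUM_YEARS`, and `retain_until` are hypothetical names; the real numbers belong to the institution's records retention policy.

```python
from datetime import date

# Placeholder periods in years, keyed by the process the artifact shaped.
RETENTION_YEARS = {"accommodation": 5, "admissions": 3}
# Placeholder floor applied to audit logs regardless of process.
AUDIT_LOG_MINIMUM_YEARS = 5


def add_years(start, years):
    """Add whole years to a date, mapping Feb 29 to Feb 28 when needed."""
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        return start.replace(year=start.year + years, day=28)


def retain_until(decision_date, process, is_audit_log=False):
    """Return the date until which an AI-generated artifact should be kept."""
    years = RETENTION_YEARS[process]
    if is_audit_log:
        years = max(years, AUDIT_LOG_MINIMUM_YEARS)
    return add_years(decision_date, years)


trace_expiry = retain_until(date(2026, 3, 1), "accommodation")
log_expiry = retain_until(date(2026, 3, 1), "admissions", is_audit_log=True)
```

Keying retention to the process rather than to the artifact type keeps a decision trace and the decision it shaped on the same clock.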

Frequently asked questions

Does FERPA apply to AI agents in higher education?

Yes, when AI agents access or generate records that are directly related to a student and maintained by the institution. The statute's text predates AI, but that two-part definition is broad enough to cover AI-handled data. The four edge cases above describe where the application requires careful interpretation: the school officials exception, classification of derived content, decision-making AI, and the right of access for AI-generated records.

What is the FERPA school officials exception and does it apply to AI?

The school officials exception (34 CFR § 99.31(a)(1)) permits institutions to disclose educational records to school officials with legitimate educational interests without student consent. Whether the exception extends to AI agents depends on the institution's specification in its annual FERPA notification and whether the AI agent operates under sufficient institutional control. Directly operated AI agents under defined institutional scope likely qualify; externally procured AI agents reaching in via interoperability protocols raise harder questions that counsel should review.

When AI generates a record about a student, is that record protected by FERPA?

Likely yes if the record is directly related to the student and maintained by the institution. AI-generated artifacts (interpretation summaries, decision traces, accommodation recommendations, confirmation records) typically meet both criteria. The institution should treat these artifacts as educational records for retention, access, and disclosure purposes unless counsel has affirmatively determined otherwise.

Do students have the right to see what AI said about them under FERPA?

Likely yes if the AI's output qualifies as an educational record. FERPA grants students the right to inspect and review their educational records (20 USC § 1232g). AI-generated artifacts that are directly related to the student and maintained by the institution likely fall within this right. Institutions should be able to produce, on demand, the AI's recommendations, summaries, and decision traces about a specific student. Where the line falls between substantive AI-generated content and pure technical infrastructure (embeddings, vector representations, intermediate model outputs) is not formally settled.

What is the FERPA classification overlay for AI agents?

A FERPA classification overlay is a data classification configuration that maps every data element handled by an AI agent against FERPA's specific categories: directory information, educational records, consent-required combinations, and school officials exception context. The overlay enforces FERPA-aligned handling automatically when AI agents access, combine, or generate data. We discuss the overlay as Layer 1 of our four-layer institutional configuration framework in our piece on the ServiceNow AI Control Tower for higher education.

What guidance has the US Department of Education issued on FERPA and AI?

The Department's Privacy Technical Assistance Center has published guidance on student privacy in technology contexts including a 2024 update touching AI considerations. The guidance is operationally useful but consciously declines to issue formal interpretive rulings on the AI edge cases. PTAC defers to institutions to work through the application of FERPA to specific AI use cases with their own counsel. The Department has not yet engaged in formal rulemaking on FERPA-and-AI.

How should higher ed institutions update their FERPA notification to address AI?

The annual FERPA notification should explicitly address whether and under what conditions AI agents (both directly operated and externally procured) qualify as school officials with legitimate educational interests. The notification should also describe the institution's posture on AI-generated records, retention of AI artifacts, and student notification when AI participates in decisions affecting them. The institution's General Counsel should draft the specific language; the five operational moves above are the framework we walk institutions through before counsel drafts.

Where this leaves the institution

FERPA and AI compliance in higher education is the legal conversation every R1 institution running ServiceNow needs to be having with counsel right now. The AI capabilities are already deployed. The compliance posture has not caught up. The four edge cases are observable, named, and addressable. The five operational moves put the institution in defensible posture while counsel does the harder interpretive work.

If your institution is scoping AI deployment and the FERPA conversation has not yet happened with your General Counsel, that is the working session we facilitate at Bettera.

Contact us and we will walk through your institution's posture against the four edge cases together using the FERPA classification overlay framework. Forward this piece directly to counsel if helpful.

Bettera is the only ServiceNow consulting partner exclusively focused on higher education, and the FERPA-and-AI conversation is the single most active legal conversation we are having with R1 institutions in 2026.

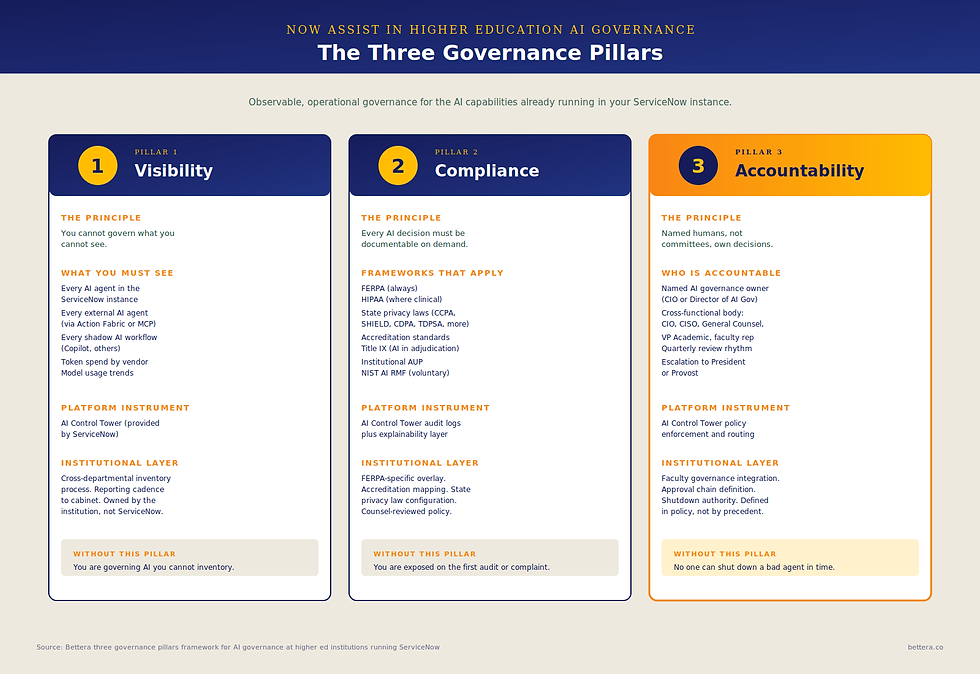

For the strategic context: our piece on Now Assist in higher education AI governance is where the three governance pillars framework and the four FERPA edge cases were first introduced.

Our piece on the ServiceNow AI Control Tower for higher education is where the four-layer institutional configuration framework (including the FERPA classification overlay) gets the deployment walkthrough.